I am a Brain & AI Scientist at University College London and a Scientific Programmer at the Donders Institute. I am interested in how the Human Brain creates Hallucinations and learns Uncertainty, both huge problems in AI Safety. I want to leverage my unique perspective to help overcome these challenges. I currently lead two multi-year experimental brain scanning studies (7T fMRI and MEG) and one computational modelling study (Deep Neural Networks, Bayesian Modelling) to investigate how human neural networks create false beliefs (hallucination) and calculate uncertainty about their own internal states (introspection). This work has led to multiple peer-reviewed articles and high-profile talks. I have professional experience evaluating and training LLMs at Google Assistant, and I developed PCNportal, a collaborative open-source Machine Learning app for big data brain analysis.

Humans and LLMs are both interrogable black boxes. I want to use my scientific expertise to make LLMs more accurate in their thinking, through experimental design and computational modelling of their internal representations.

Download my resumé.

- Mechanistic Interpretability

- Uncertainty Quantification

- Science of Hallucinations

- Computational Neuroscience

PhD in Cognitive Neuroscience, 2026

University College London

MSc in Neuroscience, 2021

King's College London

BSc in Artificial Intelligence, 2019

Utrecht University

Research & Projects

Explore my work in Neuroscience and Engineering

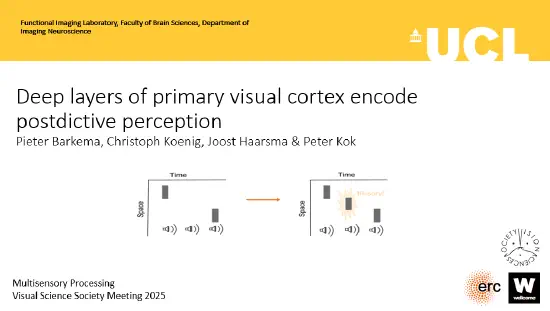

Postdictive Perception in Primary Visual Cortex

A 7T fMRI study investigating how the human brain reconstructs sensory reality after the fact.

Cross-Category Information (CCI)

A novel statistical framework to quantify informative variance in cortical noise using manifold learning.

PCNportal: Scalable Normative Modelling

A full-stack ML platform for brain-mapping using 10,000+ scans across 100+ global sites.

Experience

Public Speaking & Presentations

Discussing Neuroscience and AI at global venues

Family Talk at the Royal Institution

One-hour ticketed public talk for 200+ people on how the brain creates our reality.

Layer-specific fMRI of Postdictive Perception at 7T

Presenting high-resolution 7T fMRI data on feedback loops in the human visual cortex.